Community Notes Might Not Be As Successful as X Claims They Are

X has given users the power of crowd-sourced fact-checking, and here’s how it’s going

In January 2021, X, then known as Twitter, launched Birdwatch, a crowd-sourced fact-checking initiative to give users the power to decide what’s misleading or false on the platform and call it out. After Elon Musk took over Twitter in October 2022, he rebranded Birdwatch as the now infamous Community Notes.

Musk has long touted Community Notes as the foolproof solution for misinformation on X, even calling it the “best source of truth on the internet”. A preliminary analysis of Community Notes data made publicly available by X shows that it might not be as effective at curbing misinformation as it set out to be, and here’s why.

How do Community Notes work?

As part of this project, existing X users can apply to be contributors for Community Notes. After receiving approval, contributors start by rating other notes and eventually transition to writing notes of their own under posts they deem misleading. X then uses a bridging algorithm, which requires contributors with opposing viewpoints to agree that a note is helpful. Once a note receives a helpful rating from enough contributors, it is displayed under a post for all X users to see.
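The bridging idea described above can be illustrated with a deliberately simplified sketch. It is not X’s actual scoring code: the explicit viewpoint labels, the threshold, and the function name `note_status` are all assumptions made for illustration, and the article’s source data does not describe how X determines a contributor’s viewpoint.

```python
from collections import defaultdict


def note_status(ratings, min_helpful_per_group=2):
    """Toy bridging rule (hypothetical, not X's real algorithm).

    ratings: list of (viewpoint_group, is_helpful) tuples, where
    viewpoint_group is an assumed label such as "A" or "B".
    A note counts as helpful only if at least two different groups
    each contribute `min_helpful_per_group` helpful ratings.
    """
    helpful_by_group = defaultdict(int)
    for group, is_helpful in ratings:
        if is_helpful:
            helpful_by_group[group] += 1

    # Groups that independently found the note helpful often enough.
    agreeing_groups = [
        group
        for group, count in helpful_by_group.items()
        if count >= min_helpful_per_group
    ]

    if len(agreeing_groups) >= 2:
        return "helpful"
    return "needs more ratings"


# Many helpful ratings from a single group are not enough under this rule.
one_sided = [("A", True)] * 5
# Helpful ratings from two different groups satisfy it.
bridged = [("A", True), ("A", True), ("B", True), ("B", True)]
```

Under this toy rule, `one_sided` stays at “needs more ratings” however many like-minded contributors rate it, which is the kind of stall the rest of this article describes.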

Contributors have two main functions: rating notes written by other contributors, or writing notes themselves. The former involves rating a particular note as “helpful” or “unhelpful”.

Contributors also have the option to classify a post that they think is misleading into specific categories.

While this seems like an effective way to make platforms more democratic and curb misinformation online, it has its own pitfalls.

Far from a perfect system

An analysis of over 1.6 million Community Notes written on X since the program was first introduced showed that contributors often do not reach a consensus about the helpfulness of a note. Such a note has not received enough ratings to be categorized as helpful or unhelpful by X’s algorithm, so it keeps a status of “needs more ratings” until a consensus is reached. Because of this, a misleading post, in many cases, does not end up getting a note at all.
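For readers who want to run a similar tally on their own copy of the data, the share of notes in each status can be computed along these lines. This is a minimal sketch on made-up values: the status strings and the function name `status_shares` are assumptions for illustration, and the toy list below is not drawn from the dataset analyzed here.

```python
from collections import Counter


def status_shares(statuses):
    """Return the fraction of notes in each status.

    statuses: list of status labels, one per note (assumed labels).
    """
    total = len(statuses)
    if total == 0:
        return {}
    counts = Counter(statuses)
    return {status: count / total for status, count in counts.items()}


# Made-up example values, not real Community Notes data.
toy_statuses = [
    "helpful",
    "needs more ratings",
    "needs more ratings",
    "unhelpful",
]
shares = status_shares(toy_statuses)
```

In this toy list, half of the notes sit at “needs more ratings”; the real proportion depends on the dataset and the date it was downloaded.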

Heather Smith, 31, a receptionist based in Scotland, says they participated in Community Notes in an effort to “be a force of good for the people remaining (on X),” but have often encountered “right-wing rabbit holes” and other misinformation on the platform. Smith rated notes around political issues and other posts they deemed to be harmful.

One of the broadest criticisms of Community Notes and X also stems from the company’s decision to conduct a slew of layoffs among its trust and safety teams, the paid moderators who were responsible for keeping the platform safe. These have now been replaced solely by Community Notes. “Personally, I think Community Notes are a poor replacement for actual paid moderators,” Smith said, adding that they were frustrated by the “dangerous misinformation” present on the platform.

The status of a note keeps changing because X’s algorithm continues to give more contributors the chance to rate it. As a result, a note that was initially deemed helpful by the community might not end up being displayed on the post.

A note not gaining a helpful or unhelpful status means that a potentially misleading or misinformed post could remain unchecked for a long time and spread to users all over the platform.

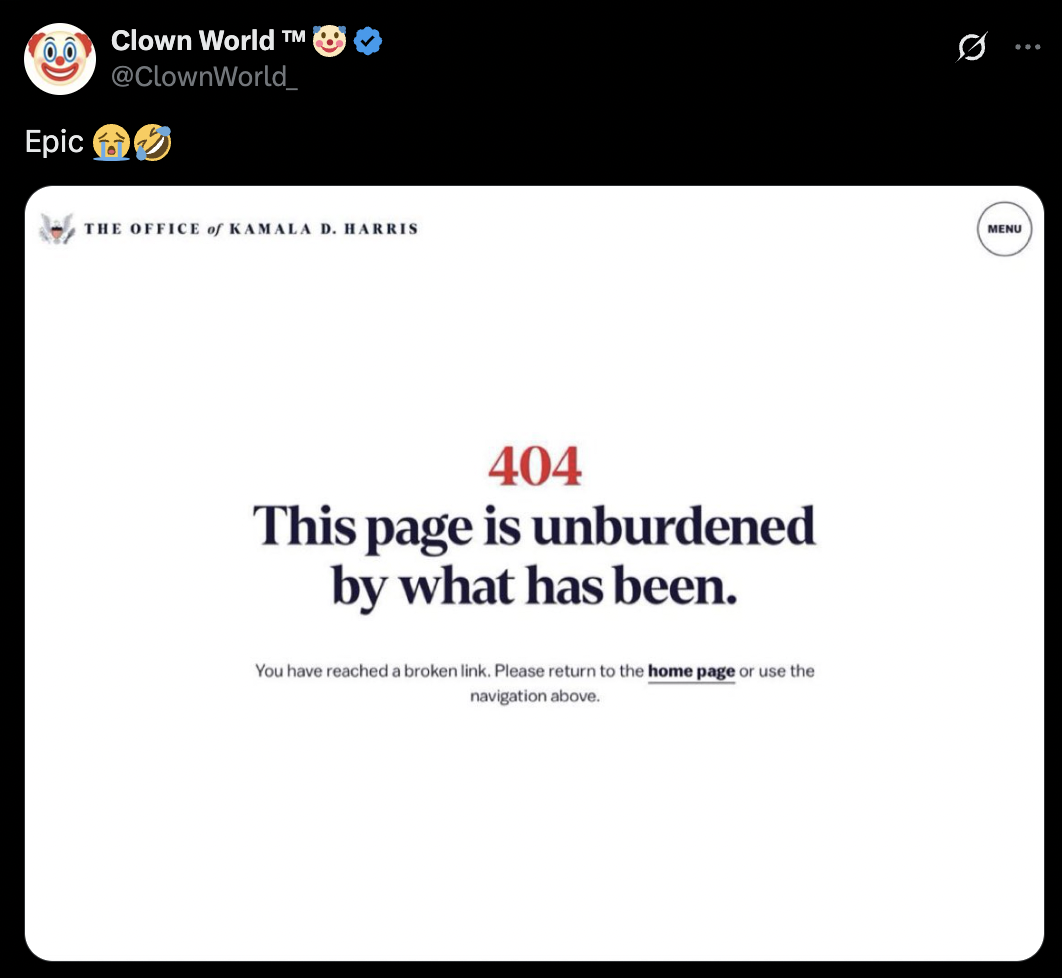

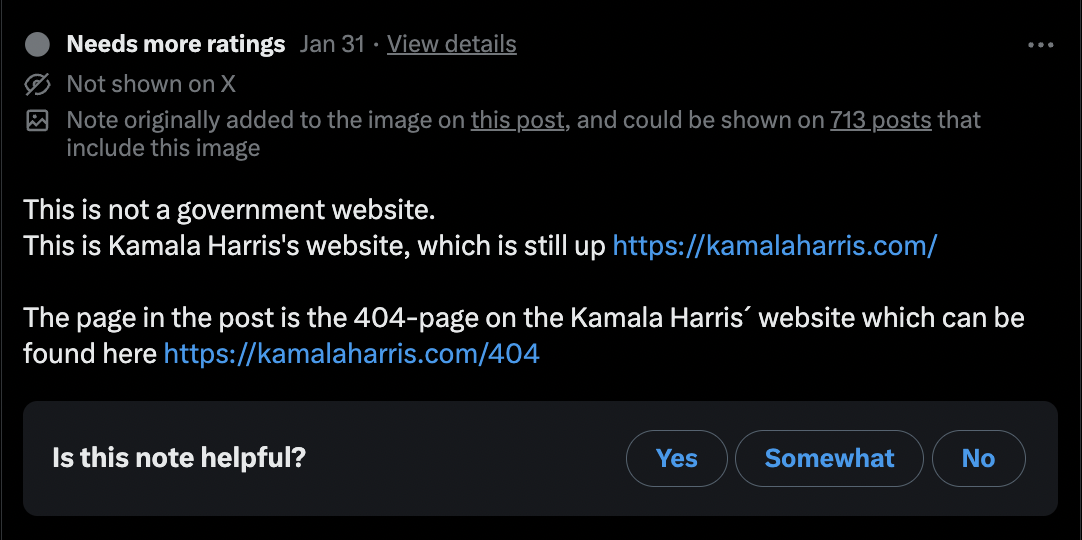

A user made this satirical post claiming to show Kamala Harris’ website being down shortly after the election. The post claimed that Harris’ page displayed the error, “This page is unburdened by what has been”, a dig at the presidential candidate, who had used the same expression at an election rally.

While the post was meant for satire, several people in the comments asked that the intern who made the website get a raise, while others replied with a screenshot of the actual website, which was indeed functional.

A Community Note laying out the facts was also proposed below the post. But the note was downvoted and is currently not displayed to all users, some of whom were seen in the comments questioning whether the post was real.

Addressing the effectiveness of Community Notes, Keith Coleman, Vice President of Product at X wrote in a 2021 blog post, “We believe this approach has the potential to respond quickly when misleading information spreads.”

Current data shows this is not entirely the case. My analysis found that many notes on potentially misleading posts have been stuck in the “needs more ratings” status for months without any consensus being reached on whether they are useful or not.

Even when a note does end up being displayed under a post, it is often too late. Data shows that there is a huge disparity between the views of a misleading post and the views its corresponding community note has. This could be because the note is added later and the post has already reached the maximum number of people or that users don’t click on the note to read it at all.

While Community Notes seem, in theory, like a great idea to curb misinformation online, experts have agreed that the idea does not translate well in practice.

Heather Smith says they have migrated to Instagram and use X less than before, despite noticing some of their rated notes being displayed under posts. “It’s not my job,” they said. “It should be someone’s job but Elon Musk would rather not pay people to do actual moderation,” they added.